April 14, 2026 / Reading Time: 4 minutes

Hollywood Has Always Run on Fragmentation. AI Is Making That Everyone's Problem.

It takes a village to make a movie. A very large, geographically distributed, loosely governed village — and most of it has no idea what the rest of it is doing with your data.

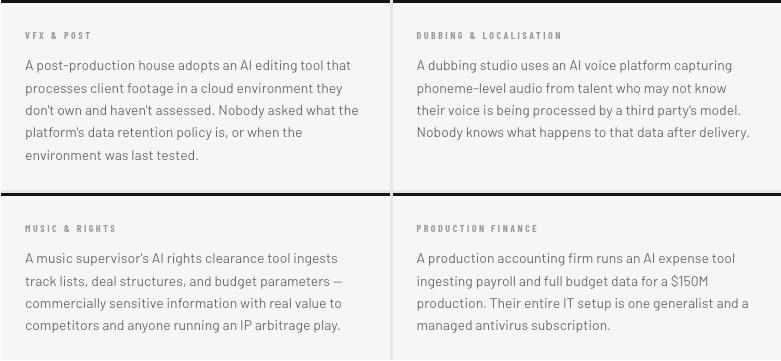

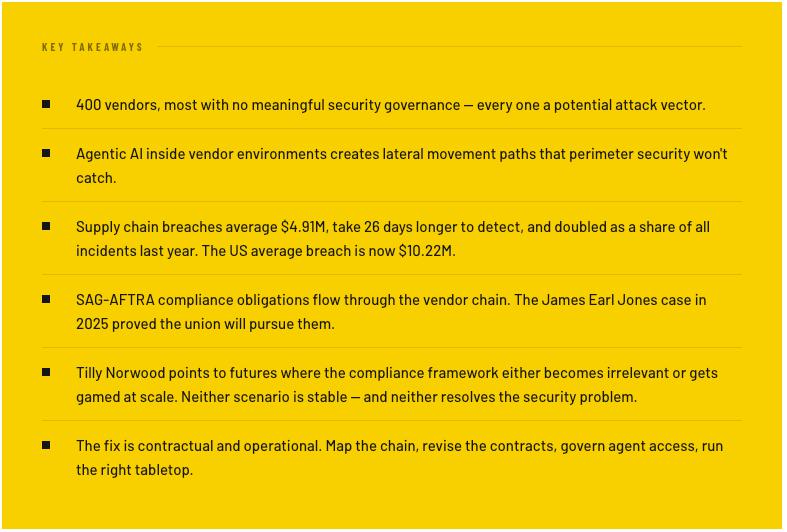

A major production routinely touches 200 to 400 separate vendors across its lifecycle. VFX houses, rendering farms, sound studios, music licensing, subtitle and localization vendors, color grading facilities, digital distribution partners — each doing one thing, doing it well, and moving on. That model is efficient and it works. But it was designed for a world where the primary supply chain risk was someone leaking a plot point to a trades journalist.

Not a world where every one of those vendors is now running AI tools, ingesting sensitive production data, and in some cases deploying autonomous agents that make decisions — and API calls — without a human in the loop.

The Structural Problem

Many of the vendors in a production chain are small. A respected VFX boutique might have 50 people. A digital dailies company — responsible for raw footage every day of a shoot — might run with eight. These companies hold access to unreleased footage, master audio tracks, talent contracts, screenplay drafts, and financial deal terms. And they get that access not through careful governance, but because the production needs them operational in 48 hours.

Credentials get shared. Offboarding is inconsistent. Storage that was "temporary" during production is never cleaned up. The project wraps, but the access doesn't always end with it. What AI has done is introduce a new, high-velocity layer of data flow on top of this already porous structure — moving faster than anyone's governance can keep up with.
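One way to make the "access outlives the project" problem concrete is a periodic audit that compares active vendor grants against wrap dates. The sketch below is illustrative only: `AccessGrant`, `stale_grants`, the 14-day grace period, and the vendor and project names are all hypothetical, and a real audit would pull grants from your identity provider or storage platform rather than a hard-coded list.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class AccessGrant:
    """One vendor's access to one resource on one project (hypothetical schema)."""
    vendor: str
    resource: str
    project: str
    revoked: bool = False


def stale_grants(grants, wrap_dates, today, grace_days=14):
    """Return grants still active more than grace_days after their project wrapped."""
    stale = []
    for g in grants:
        wrap = wrap_dates.get(g.project)
        if wrap is None or g.revoked:
            continue  # project has no wrap date yet, or access was already removed
        if today > wrap + timedelta(days=grace_days):
            stale.append(g)
    return stale


# Hypothetical inventory; in practice this would come from an IAM or storage export.
grants = [
    AccessGrant("dailies-co", "raw-footage-bucket", "feature-a"),
    AccessGrant("vfx-boutique", "plates-share", "feature-a", revoked=True),
    AccessGrant("sound-studio", "master-audio", "feature-b"),
]
wrap_dates = {"feature-a": date(2026, 1, 10)}  # feature-b has not wrapped

flagged = stale_grants(grants, wrap_dates, today=date(2026, 4, 14))
for g in flagged:
    print(f"{g.vendor} still has access to {g.resource}")
```

Even a check this simple only works if someone records wrap dates and grants in one place, which is often the harder part.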

None of this is theoretical. It's all happening right now. And in most cases, the studio or network at the top of the chain has no visibility into any of it.

Agents Make This Significantly Worse

Traditional AI tool usage is at least bounded. A human asks a question, a tool returns a result, a human decides. An agent is different. An AI agent deployed by a vendor to manage delivery schedules or coordinate with your asset management system needs API access, credentials, and the ability to read from and write to systems on both sides of the relationship.

An agent inside a compromised vendor environment, with API access to your systems, is a lateral movement path. It doesn't notice something's wrong. It just keeps executing its instructions.
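A common way to narrow that path is to give each vendor agent an explicit allowlist of the calls it may make, and deny everything else by default. The following is a minimal sketch of that idea, not a description of any particular gateway product: `AgentScope`, `is_permitted`, the agent ID, and the `/schedules` and `/assets` paths are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentScope:
    """Explicit allowlist of (HTTP method, path prefix) pairs for one agent."""
    agent_id: str
    allowed: frozenset


def is_permitted(scope, method, path):
    """Allow a call only if its method and path match an allowlist entry.

    A prefix matches the exact path or anything beneath it, so "/schedules"
    covers "/schedules/reel-3" but not "/schedules-archive".
    """
    return any(
        method == m and (path == prefix or path.startswith(prefix.rstrip("/") + "/"))
        for m, prefix in scope.allowed
    )


# Hypothetical scope: a delivery-scheduling agent that may read and update schedules only.
scheduler = AgentScope(
    agent_id="vendor-delivery-agent",
    allowed=frozenset({("GET", "/schedules"), ("PUT", "/schedules")}),
)

print(is_permitted(scheduler, "GET", "/schedules/reel-3"))       # in scope
print(is_permitted(scheduler, "GET", "/assets/master-audio"))    # outside scope
print(is_permitted(scheduler, "DELETE", "/schedules/reel-3"))    # method not granted
```

A scope like this does not stop a compromised agent from misusing what it is allowed to do, but it limits how far that misuse can travel.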

Security researchers have documented prompt injection attacks against AI agents — where malicious content embedded in data the agent processes causes it to take unintended actions. For production environments where agents handle content from dozens of external sources daily, this is a live attack surface most security teams haven't begun to model.

And when something goes wrong, the forensic trail gets murky fast. Was it the agent? A compromised agent? A compromised vendor leveraging the agent's access? Answering that question takes time you probably don't have.

The Regulatory Exposure

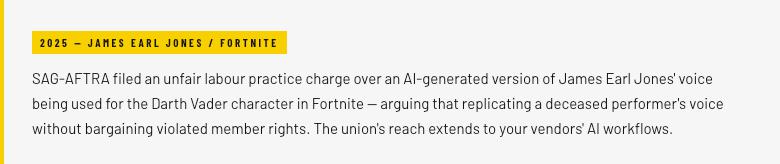

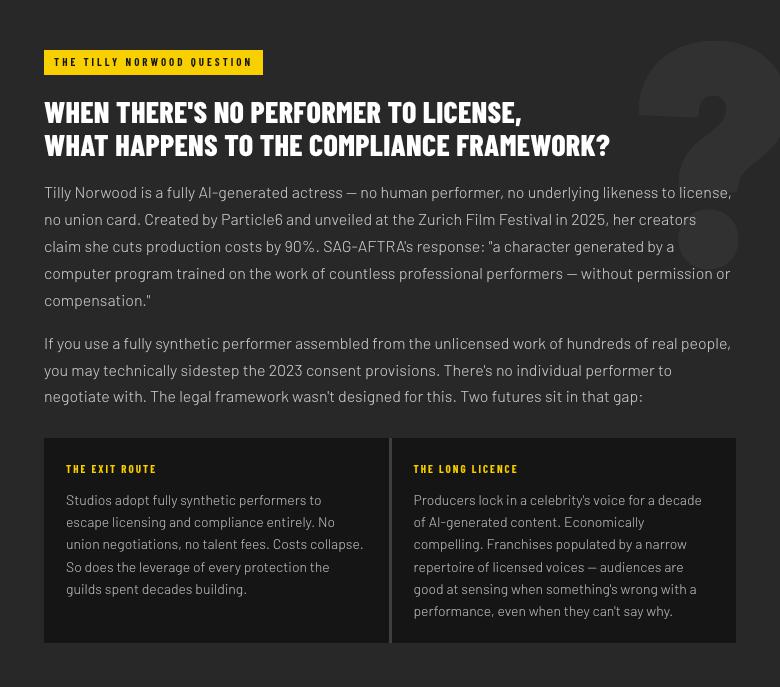

Guild obligations that flow to your vendors. The 2023 SAG-AFTRA TV/Theatrical Agreement is clear: no digital replica of a performer's voice or likeness can be used without specific, written consent and compensation. A producer cannot scan a performer, ship that data to a vendor, and assume the downstream AI tools that vendor uses fall outside the agreement's scope. The studio at the top of the chain is contractually responsible regardless of where in the chain the breach occurs.

California CCPA. Every AI tool in your vendor chain that processes crew, talent, or subscriber data involving California residents is a CCPA-relevant processing activity. Separately, AB 1836 (2024) prohibits digital replicas of deceased personalities made without consent. The liability attaches at the top of the chain, not at the vendor level.

But here's what nobody in these compliance conversations wants to say out loud.

Either way, the compliance landscape is shifting fast enough that neither guild agreements nor state privacy laws are stable enough to plan around with confidence. That instability is itself a risk.

The Numbers

2×: Third-party breaches doubled as a share of all incidents in a single year and now account for 30% of all breaches (Verizon DBIR 2025).

+40%: Supply chain breaches are 40% more expensive to remediate than internal breaches, a result of dual-environment forensics and 26 extra days to detect (Gartner).

In the United States, the average breach now costs $10.22 million — the highest of any country globally, up 9% on 2024. Those 26 extra days to detect a supply chain breach mean 26 additional days during which unreleased footage, talent data, and production financials are sitting exposed in someone else's compromised environment.

The Hurt Locker won six Academy Awards. After a pre-release leak, it made just $49 million worldwide. The Sony Pictures breach in 2014 was not primarily a piracy story — the most enduring damage was internal emails and talent data going public. "The reputational risk from leaked correspondence is harder to calculate than any box office impact," one crisis expert observed at the time, "because you have no way of knowing what's in those emails."

The AI cost savings being modeled for production are real. A single supply chain breach through one unassessed vendor running an ungoverned AI tool could exceed those savings entirely — in remediation, regulatory fines, and lost box office combined. That line item is missing from almost every AI business case in this industry right now.

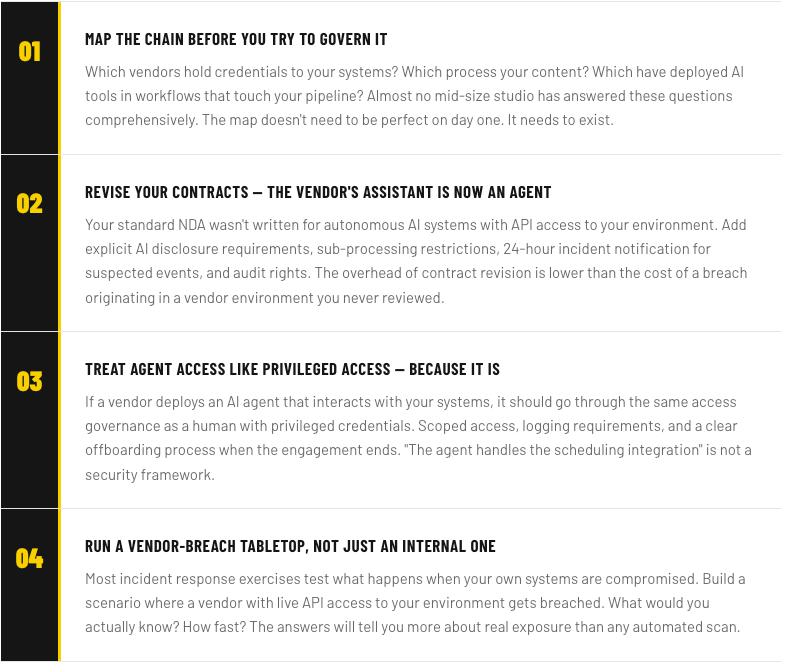

Four Things to Do Now

Want to see where your supply chain is actually exposed?

We work with media and entertainment security teams on exactly this — understanding what's actually flowing through your vendor ecosystem and where the real exposure sits. No pitch, no deck.